Wim Leers (@wimleers) is a 22-year-old Computer Science student who is finishing a master's in Databases at Hasselt University in Belgium, where he is now working hard on his master's thesis on WPO analytics. He did his bachelor's thesis on improving Drupal's page loading performance and wrote the FileConveyor daemon to detect, process and sync files to CDNs or static file servers. He has been contributing to Drupal for almost 4 years and now mostly evangelizes WPO.

Introduction

Web performance monitoring services such as Gomez, Keynote, Webmetrics, Pingdom, Webpagetest (which was also featured in last year’s web performance advent calendar) and recent newcomers such as Yottaa are all examples of synthetic performance monitoring (SPM) tools.

In this article, I will explain the advantages of the opposite approach: real performance monitoring (RPM). This approach also has its disadvantages, but these are swiftly evaporating. The RPM approach will be explained using Steve Souders’ Episodes.

It will become clear that RPM will win out over SPM in the long run. The most important missing link to that end is a tool to analyze the data collected by RPM. And that is exactly what I’m working on for my master’s thesis, which is titled “Web Performance Optimization: Analytics”.

Finally, RPM enables very interesting optimizations, such as automatically generating the most optimal CSS and JavaScript bundles.

Synthetic Performance Monitoring

The ‘synthetic’ in SPM is there because performance isn’t being measured from real end users, but instead by the SPM provider’s servers. These simulated users access your site either periodically or randomly. Typically, Internet Explorer 6 or 7 is used, and sometimes Firefox, all in a very controlled environment. That’s hardly a typical end user, so it should already be clear that this is not realistic.

That doesn’t mean the data generated by SPM is useless. Far from it: it’s still a meaningful reference. It just doesn’t reflect real-world page loading performance.

Typically, you record a “test script” that follows a certain path through your web site, and the time it takes to navigate along this path is how performance is monitored. This means that if you want to switch to another SPM provider, you will have to record your test scripts again, because they don’t use an interoperable standard.

Real Performance Monitoring

On the other end of the spectrum, there’s RPM, which requires slightly deeper integration with your website, but with great rewards: actual site visitors’ page load times are measured and collected for analysis. This means that we’re able to see exactly how the real end user perceives the loading of the page! (Or at least a very close approximation.)

How is this achieved? By inserting very small pieces of JavaScript at strategically chosen places inside the HTML document: this is called programmatic instrumenting (for non-native speakers: ‘instrumenting’ is “equipping something with measuring instruments”).
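To make the idea concrete, here is a minimal sketch of what such instrumentation boils down to: recording named timestamps ("marks") and computing durations between them ("measures"). The `createTimer` function and its method names are hypothetical and only illustrate the principle; this is not the Episodes API. The clock is passed in as a parameter (an assumption made here so the sketch can also run outside a browser).

```javascript
// Hypothetical minimal timer; illustrates the principle, not the Episodes API.
function createTimer(now = Date.now) {
  const marks = {};    // mark name -> timestamp in ms
  const measures = {}; // measure name -> duration in ms

  return {
    // Record the current time under the given name.
    mark(name) {
      marks[name] = now();
    },
    // Store and return the time elapsed since the mark `startName`.
    measure(name, startName) {
      if (!(startName in marks)) {
        throw new Error(`Unknown mark: ${startName}`);
      }
      measures[name] = now() - marks[startName];
      return measures[name];
    },
    // Return a copy of all durations collected so far.
    results() {
      return { ...measures };
    },
  };
}
```

In a page, one would call `mark()` in a small inline script just before, say, the stylesheets, and `measure()` in another inline script right after them.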

Of course, this instrumentation will typically require a (very) small JavaScript library, and therefore yet another HTTP request, that will have to happen as soon as possible in the document. Plus, it will only be an “as close as possible approximation”: it can’t be exact.

This downside will fade away as internet users move to browsers that support the Web Timing spec (which has recently been renamed to Navigation Timing). This API is currently supported by Internet Explorer 9 (since the third platform preview) and by Google Chrome 6 and up. Most likely it will be supported by the next major version of Safari (because both Chrome and Safari rely on WebKit), but unfortunately support is nowhere to be found in Firefox. Most likely, it won’t even be included in Firefox 4! This is very unfortunate, because it will probably slow down adoption of the Web Timing/Navigation Timing spec.

Since this is the only way we can achieve an interoperable, reliable, exact manner to gather performance metrics, this is most unfortunate for the emerging WPO industry.
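As a rough illustration of why this API matters, the sketch below derives a few durations from a Navigation Timing-style object. The attribute names (`navigationStart`, `responseStart`, `domContentLoadedEventEnd`, `loadEventEnd`) are those of the Navigation Timing spec; the function names `summarizeTiming` and `readBrowserTiming` are hypothetical, and vendor-prefixed variants in early implementations are not handled here.

```javascript
// Derive a few durations (in ms) from a Navigation Timing-style object.
// In a supporting browser this would be window.performance.timing, read
// after the load event has finished (loadEventEnd is 0 before that).
function summarizeTiming(timing) {
  const start = timing.navigationStart;
  return {
    // Time until the first byte of the response arrived.
    firstByte: timing.responseStart - start,
    // Time until the DOMContentLoaded handlers finished.
    domReady: timing.domContentLoadedEventEnd - start,
    // Time until the load event finished.
    pageLoad: timing.loadEventEnd - start,
  };
}

// Feature detection: returns null where the API is unavailable, so the
// caller can fall back to JavaScript-based instrumentation.
function readBrowserTiming(globalObject) {
  const perf = globalObject && globalObject.performance;
  return perf && perf.timing ? summarizeTiming(perf.timing) : null;
}
```

Because the browser itself records `navigationStart`, no library has to be loaded early in the document just to capture the start time.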

(If you’re reading this, you’re most likely running the most up-to-date version of your favorite browser. Well then, there are two demos available. Firefox & Safari users will have to switch to either Chrome or the Internet Explorer 9 preview for just a minute.)

Now, if we could standardize on an interoperable RPM standard, then the SPM providers could add support for visualizing and analyzing RPM. Not only would we get more realistic performance numbers, we would also avoid lock-in!

Episodes

The first tool I know of that implements RPM is the now-defunct Jiffy. Fairly soon after that, in July 2008, there was Steve Souders’ Episodes (version 1), which was accompanied by an excellent white paper. Episodes is superior because it is a lighter weight implementation.

I strongly recommend reading that white paper, since it covers all aspects of Episodes, including the benefits for all involved parties — it’s written in a very accessible manner, in plain English instead of awful academic English.

Here’s a brief, slightly reformatted excerpt about the goals and vision for Episodes, which highlights why Episodes is a great starting point for RPM:

The goal is to make Episodes the industrywide solution for measuring web page load times. This is possible because Episodes has benefits for all the stakeholders:

- Web developers only need to learn and deploy a single framework.

- Tool developers and web metrics service providers get more accurate timing information by relying on instrumentation inserted by the developer of the web page.

- Browser developers gain insight into what is happening in the web page by relying on the context relayed by Episodes.

Most importantly, users benefit by the adoption of Episodes. They get a browser that can better inform them of the web page’s status for Web 2.0 apps. Since Episodes is a lighter weight design than other instrumentation frameworks, users get faster pages. As Episodes makes it easier for web developers to shine a light on performance issues, the end result is an Internet experience that is faster for everyone.

About a month ago, Steve released Episodes version 2, for which the source code is now available (Apache license 2.0). Some problems have been fixed and most notably, the page load start time is now retrieved using the Web Timing API whenever available, or even the Google Toolbar when it is installed (both offer increased accuracy).

Please join us at the Episodes Google Group, to help move Episodes forward!

Boomerang

While it is not entirely comparable with Episodes, Yahoo’s Boomerang, released in June 2010, is also very interesting. It’s less focused on measuring the various stages of loading a web page: it also has the ability to estimate the latency from the end user to the web server (fairly accurately) and the throughput. (The author calls this “bandwidth”, but that’s wrong: these two terms are incorrectly being used interchangeably by most people nowadays, probably due to broadband internet providers’ marketing.)

It would be nice to see latency and throughput estimation become an optional part of Episodes. Boomerang’s other features can be classified as duplicate functionality of Episodes. We should standardize on a single library, or at least ensure interoperability.

Drupal Episodes module

This section shows how one can take advantage of a framework’s existing infrastructure to solidly and rapidly integrate Episodes.

As part of my bachelor thesis, I wrote the Episodes module for Drupal, which automatically integrates Episodes with Drupal.

In Drupal, each piece of JavaScript that adds a particular “behavior” is defined as a property of Drupal.behaviors. Each behavior will then be called when the DOM has loaded, but also when new DOM elements are added to the page via AJAX callbacks. All one has to do is call Drupal.attachBehaviors() after the new content has been added to the document.

Then the obvious way to integrate Episodes with Drupal is overriding Drupal.attachBehaviors() so that it uses Episodes’ mark and measure system for each Drupal behavior. Thus Drupal’s JavaScript abstraction layer in combination with Episodes, allows us to detect slow JavaScript code! And not any specific JavaScript code, quite the opposite: it works for any Drupal module’s JavaScript code. Very little effort for a lot of gain!
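The override described above can be sketched roughly as follows. This is not the Episodes module’s actual code: the `Drupal` object below is a stand-in so the example runs outside Drupal (it assumes behaviors are plain functions that receive a context), and `instrumentBehaviors` and its `record` callback are hypothetical names. A real integration would call Episodes’ mark and measure functions where `record` is called.

```javascript
// Stand-in for Drupal's behavior system, so the sketch runs outside Drupal.
const Drupal = {
  behaviors: {},
  attachBehaviors(context) {
    for (const name of Object.keys(this.behaviors)) {
      this.behaviors[name](context);
    }
  },
};

// Replace attachBehaviors with a version that times every behavior.
// `record(name, durationMs)` is a hypothetical callback; `now` is injectable
// so the timing can be tested with a fake clock.
function instrumentBehaviors(drupal, record, now = Date.now) {
  drupal.attachBehaviors = function (context) {
    for (const name of Object.keys(drupal.behaviors)) {
      const start = now();
      drupal.behaviors[name](context);
      record(name, now() - start);
    }
  };
}
```

Since the override lives in one place, module authors do not have to change anything: every behavior registered in `Drupal.behaviors` is timed automatically.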

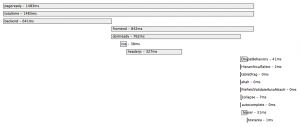

Here’s a screenshot of the Firebug add-on:

The brief episodes in the bottom right corner are Drupal JavaScript behaviors, that are converted into Episodes automatically.

WPO Analytics

Timings collected by Episodes (and similarly by Boomerang) are sent to a beacon URL, in the query string. The web server that serves this beacon URL then logs the requested URL in its log file. It’s this log file that you can now use to analyze the data collected by Episodes.
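As an illustration, extracting the timings from such a log line could look like the sketch below. The log line layout and the `ets` query parameter holding comma-separated `name:duration` pairs are assumptions made for this example, as is the function name `parseBeaconLine`; check the format that your beacon and web server actually produce.

```javascript
// Parse one access-log line into an { episodeName: durationMs } object.
// Assumes (for illustration) a request such as
//   "GET /beacon?ets=backend:120,frontend:840 HTTP/1.1"
// where `ets` holds comma-separated name:duration pairs.
function parseBeaconLine(line) {
  const match = line.match(/"GET (\S+) HTTP\/[\d.]+"/);
  if (!match) return null;

  // The base URL is only needed to parse a relative request target.
  const url = new URL(match[1], 'http://localhost');
  const ets = url.searchParams.get('ets');
  if (!ets) return null;

  const timings = {};
  for (const pair of ets.split(',')) {
    const [name, value] = pair.split(':');
    // Skip malformed pairs instead of recording bogus durations.
    if (name && value && Number.isFinite(Number(value))) {
      timings[name] = Number(value);
    }
  }
  return timings;
}
```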

Collecting the timing data does not magically improve the performance of your website. You have to understand it and interpret it to find the causes of performance problems. Ideally, there would be a tool that is smart enough to automatically pinpoint causes of slow page loads for you:

- “http://example.com/fancy-landing-page’s CSS episode is slow”: detect slow episodes

- “http://example.com’s JavaScript episode is slow for visitors that use the browser Internet Explorer 6 or 7”: detect browser-specific problems

- “http://example.com/some-page is slow in Belgium”: detect geographical problems

- “http://example.com/gallery’s loadCarousel episode is slow in Belgium for visitors that use the browser Firefox 3”: detect complex problems

- …

That’s exactly what I’m trying to achieve with my master’s thesis (in case you’re interested: I’ve already completed the literature study). The goal is to build something similar to Google Analytics, but for page loading performance (WPO) instead of just page loads. I’m using advanced data mining techniques (actually, data stream mining and anomaly detection) to find patterns in the data (i.e. to pinpoint causes of slow page loads, like the examples above) as well as OLAP techniques (data cube) to be able to quickly browse the collected data, to also allow for human analysis.
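To give a feel for the kind of question such a tool answers, here is a deliberately naive sketch: it groups timing records by a chosen set of dimensions (much like picking a slice of a data cube) and reports the groups whose median duration exceeds a fixed threshold. This is only a stand-in for illustration, not the data stream mining or anomaly detection used in the thesis; the record fields (`url`, `episode`, `browser`, `country`, `duration`) and the function names are hypothetical.

```javascript
// Median of a non-empty list of numbers.
function median(values) {
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Group records by the given dimensions and report the groups whose median
// duration exceeds thresholdMs.
function findSlowGroups(records, dimensions, thresholdMs) {
  const groups = new Map(); // group key -> list of durations
  for (const record of records) {
    const key = dimensions.map((d) => record[d]).join(' | ');
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(record.duration);
  }

  const slow = [];
  for (const [key, durations] of groups) {
    const m = median(durations);
    if (m > thresholdMs) {
      slow.push({ group: key, median: m, samples: durations.length });
    }
  }
  return slow;
}
```

Calling `findSlowGroups(records, ['episode', 'browser', 'country'], 500)` would then surface a combination such as “loadCarousel, Firefox 3, Belgium”, provided the records contain enough slow samples for it.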

All code and documents are publicly available.

Automated optimal CSS/JavaScript bundling

Another interesting possibility is this: what if we let Episodes also log which CSS and JavaScript files were used on each page (even when they’re bundled)? Because Episodes logs the page load times of each page, we can then also calculate which CSS and JavaScript bundles (i.e. bundling multiple CSS/JavaScript files into a single file) would be most optimal, that is, which bundles would yield the best balance of minimal number of HTTP requests versus maximal cacheability.

Most frameworks that support CSS and JavaScript bundling today (including Drupal), follow a simple bundling approach that works, but is far from optimal: they just bundle each unique combination of files into a single file. An example:

- page A needs x.css, y.css and z.css; these are combined into xyz.css

- page B needs x.css and y.css; these are combined into xy.css

When a user now visits page A, they download xyz.css. If they subsequently visit page B, they still have to download xy.css, because the framework wasn’t smart enough to just use xyz.css. More complex examples are possible where the solution isn’t this simple (e.g. if page B also has a w.css file), but it’s not hard to see the problem at hand. A better solution in that more complex case would have been to let page A serve xy.css and z.css, and page B w.css and xy.css. Then xy.css would already be in the user’s browser cache, and one less request would have been necessary.

By using Episodes’ log data, we could automatically calculate the most optimal bundles!
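One simple heuristic for this, sketched below, is to bundle together the files that are used by exactly the same set of pages. It is only an illustration under simplifying assumptions: it ignores page popularity, file sizes and the order in which stylesheets must be applied, so it is not claimed to produce the most optimal bundles. The function name `planBundles` and the input shape (page name mapped to a list of files) are hypothetical.

```javascript
// Group files that are used by exactly the same set of pages.
// `pages` maps a page name to the list of CSS (or JavaScript) files it needs.
function planBundles(pages) {
  // file -> list of pages that use it
  const usedBy = new Map();
  for (const [page, files] of Object.entries(pages)) {
    for (const file of new Set(files)) { // ignore duplicate entries per page
      if (!usedBy.has(file)) usedBy.set(file, []);
      usedBy.get(file).push(page);
    }
  }

  // page-set signature -> files sharing that signature
  const bundles = new Map();
  for (const [file, pageList] of usedBy) {
    const signature = [...pageList].sort().join(',');
    if (!bundles.has(signature)) bundles.set(signature, []);
    bundles.get(signature).push(file);
  }

  return [...bundles.values()].map((files) => files.sort());
}
```

For page A with x.css, y.css and z.css, and page B with w.css, x.css and y.css, this groups x.css and y.css into one shared bundle and leaves z.css and w.css on their own, which matches the better solution described above.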

Conclusion

Much work is left to be done in the field of Web Performance Optimization. One of the most interesting and promising new developments is the widespread integration of Real Performance Monitoring, preferably by developing an industry standard, likely starting from the Web Timing spec and the Episodes library. What is still badly needed is a WPO analytics tool that provides easy (and as automated as possible) insight into the data collected by RPM.

I hope that this article has piqued the interest of at least some readers, and that they will start contributing to the exciting and rewarding field of WPO. I welcome you in the Episodes Google Group or in the comments of my “WPO Analytics” master’s thesis blog posts!