Secrets of High Performance Native Mobile Applications

10th Dec 2011 by Israel Nir

Israel Nir (@shunra) likes to create stuff, break other stuff apart, code, the number 0x17 and playing the ukulele. He also works as a team leader at Shunra, where he builds tools to make applications run faster.

Since Steve Souders published his seminal book “High Performance Web Sites” four years ago, the world has changed considerably. Web sites became faster, browsers significantly improved and users started to expect top performance. During these four years, a new category of client-facing applications was born, one that currently receives little attention from the performance community: native mobile applications. These applications have their own set of challenges and opportunities. Luckily, they also have a lot in common with good old web applications. One thing’s for certain: users expect native apps to perform as fast as web sites, if not faster. With the Christmas rush in full swing, users are bound to be even less tolerant of poorly performing apps, so I figured it’s a good time to see how the top sellers’ mobile apps perform and, at the same time, make a dent in my holiday gift list.

What are the two factors that most affect app performance? I’m not going to discuss native code tweaks, since this is predominantly platform-dependent and will probably put most of you to sleep. So let’s focus on mobile performance tuning – improving the application’s behavior over the network. The importance of network utilization is even greater considering the kind of network conditions these apps are most likely to encounter, such as high latency and low bandwidth.

To analyze a mobile app’s network traffic, you can start by setting up an ad-hoc WiFi network on a computer, connecting your mobile device to that network and running a packet capture on the computer. Then use an application such as Wireshark to examine the traffic generated by your application, or load the packet capture into a tool like PcapPerf. Another option is to use a proxy, such as Charles Proxy or Fiddler, but be aware that a proxy may change your app’s network behavior, for example by limiting the number of concurrent connections. Personally, I use my company’s tools (Shunra vCat with Analytics) to capture and analyze the app’s traffic. These tools also let me emulate mobile networks, so it’s easier to detect problems that only manifest on particular mobile networks, such as 3G.

Keep an eye on your waterfalls

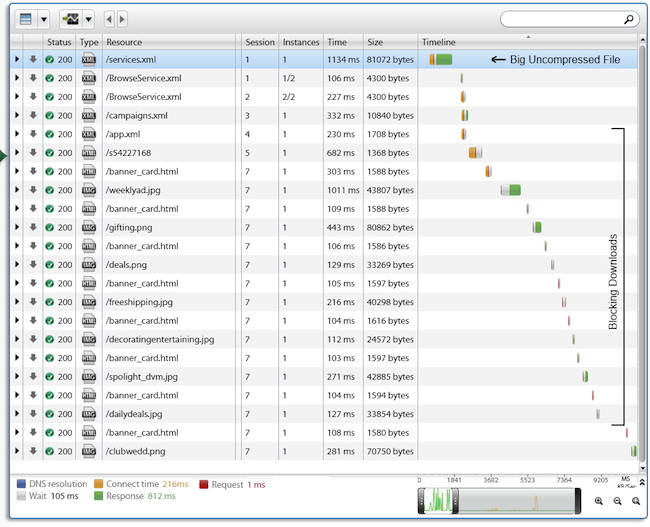

Time to start some serious shopping, so let’s look at one of the major mobile retail players. Starting with Mom, the world traveller, I thought a new luggage set would be appreciated. Lots of choices here – now what’s her favorite color? I had lots of time to ponder this question, because this retailer’s iPhone app takes quite a while to load. Examination of the HTTP waterfall reveals a long daisy chain of resources blocking each other, lasting for 7.5 seconds. Notice that in this case, images are blocking parallel downloads, which is something you won’t typically see in a web app.

While web developers can enable parallel downloads with a few simple tweaks and put their trust in browser makers, it’s up to the native app developer to come up with the optimal concurrent download scheme. Our research shows that even on mobile networks you can obtain a performance gain using up to four parallel downloads, and advanced users can switch to HTTP pipelining to acquire another speed boost.
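To make the idea of a capped concurrent download scheme concrete, here is a minimal sketch in Python (chosen for brevity; a real app would use its platform's networking stack). The function name `download_all` and the injected `fetch` callable are hypothetical, and the limit of four simply mirrors the figure mentioned above rather than a universal optimum.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable

# Assumption: four parallel downloads, the ceiling suggested in the text above.
MAX_PARALLEL_DOWNLOADS = 4


def download_all(urls: Iterable[str],
                 fetch: Callable[[str], bytes],
                 max_parallel: int = MAX_PARALLEL_DOWNLOADS) -> Dict[str, bytes]:
    """Fetch every URL, running at most `max_parallel` requests at once.

    `fetch` is whatever function performs a single download; it is passed
    in so the scheduling logic stays independent of the HTTP client used.
    """
    url_list = list(urls)
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # pool.map returns results in the same order as the input URLs.
        bodies = list(pool.map(fetch, url_list))
    return dict(zip(url_list, bodies))
```

Because independent resources such as images no longer wait for one another, the long daisy chain in the waterfall collapses into a few parallel lanes. The right limit for a given app is best found by measuring on the networks its users actually have.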

Compress those resources

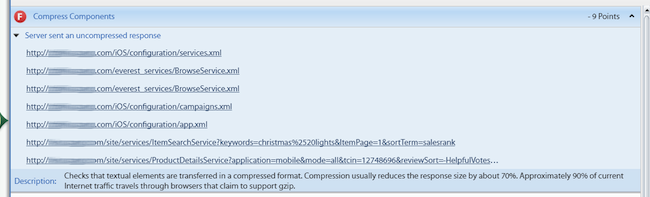

In the waterfall above, you may notice that the first resource, services.xml, is 81KB in size and takes more than a second to fetch over the network (blocking all the resources that follow it). Of that second, 812ms are spent just downloading the file. The response headers show that it was sent uncompressed. Had it been compressed, it would have weighed only 6KB, saving at least half a second in response time. And it’s not the only resource this app sends uncompressed.
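A quick way to check how much a text resource would shrink is to gzip it yourself. The sketch below uses Python's standard `gzip` module; the helper names are hypothetical, and the time estimate assumes that download time scales linearly with size, which is only a rough approximation on a real network. For compression to happen on the wire, the client sends `Accept-Encoding: gzip` and the server answers with `Content-Encoding: gzip`.

```python
import gzip


def compression_report(payload: bytes) -> dict:
    """Return the original size, the gzip-compressed size and their ratio."""
    if not payload:
        raise ValueError("payload must not be empty")
    compressed = gzip.compress(payload)
    return {
        "original_bytes": len(payload),
        "compressed_bytes": len(compressed),
        "ratio": len(compressed) / len(payload),
    }


def download_time_saved_ms(original_bytes: float,
                           compressed_bytes: float,
                           download_ms: float) -> float:
    """Estimate the download time saved by compression.

    Assumption: download time is proportional to the number of bytes sent.
    """
    return download_ms * (1 - compressed_bytes / original_bytes)


# The figures quoted above: 81KB uncompressed, 6KB compressed, 812ms download.
saved_ms = download_time_saved_ms(81, 6, 812)
```

Under that linear assumption, `saved_ms` comes out at roughly 750ms, which is consistent with the "at least half a second" estimate above.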

Don’t download the same content twice

This should be a no-brainer, but we have observed this performance anti-pattern in so many Android and iPhone apps that it’s worth pointing out. When implementing a native app, it’s the developer’s responsibility to implement a basic caching mechanism. Just setting the caching headers of HTTP responses is usually not enough. Here’s what happened when I was looking for a baby gift using the iPhone app of an e-commerce site known for its handmade items:

Cute baby, but the same image was downloaded three times, and this was typical: many other images were also downloaded multiple times. Moreover, some images were downloaded more than once within the same TCP session. Creating a basic caching layer, one that caches elements in memory as long as the application is running, is not that complicated. It greatly improves performance and highlights your professionalism.
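As an illustration of how small such a caching layer can be, here is a minimal Python sketch. The class name `InMemoryCache` and the injected `fetch` callable are hypothetical; the cache lives only as long as the process and, for simplicity, never evicts anything, so a real app would also want to cap its memory use.

```python
import threading
from typing import Callable, Dict


class InMemoryCache:
    """Keep downloaded bodies in memory, keyed by URL, while the app runs."""

    def __init__(self, fetch: Callable[[str], bytes]) -> None:
        self._fetch = fetch
        self._store: Dict[str, bytes] = {}
        self._lock = threading.Lock()

    def get(self, url: str) -> bytes:
        with self._lock:
            if url in self._store:
                return self._store[url]  # cache hit: no network traffic
        # Download outside the lock so a slow request does not block hits.
        # Two threads asking for the same new URL at the same moment may
        # both download it; the first stored copy is the one that is kept.
        body = self._fetch(url)
        with self._lock:
            return self._store.setdefault(url, body)
```

With this in place, asking for the same image three times costs one download instead of three.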

Can too much Adriana Lima slow you down?

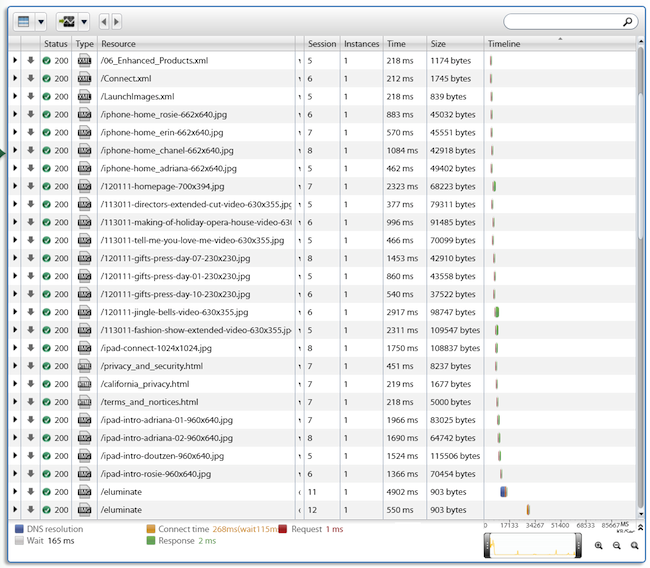

Tired of looking for the usual Christmas presents, I launched a famous lingerie retailer’s app, looking for, hmmm, stockings to put in my girlfriend’s Christmas stocking. Though I enjoy looking at Adriana Lima as much as the next guy, downloading huge images of her and the other VS models was actually quite painful. Surprisingly, though I was using an iPhone, I was getting both the iPhone and the iPad versions of the images. The iPad images were obviously not optimized for my small screen, and amounted to half a megabyte of wasted traffic. Although this might be OK over a wired network, it’s exasperating on a mobile network.

During the past year we have encountered many applications that exhibit similar performance faux pas. Hipmunk, the hip flight search application, downloaded a big data file (650KB after compression) containing the entire search results in one chunk. It would have been better to split that file into several smaller files, some of which could be downloaded asynchronously. Other applications download many very small files that could easily be combined into fewer, larger files to avoid the performance hit caused by the high latency of mobile networks.
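A back-of-the-envelope model shows why many tiny files hurt on a high-latency link. The Python sketch below charges one round trip per request plus the time needed to push the bytes. It deliberately ignores connection setup, TCP slow start and header overhead, and every number in it (40 files of 2KB, a 300ms round trip, 100KB/s of bandwidth) is a hypothetical value chosen for illustration, not a measurement.

```python
import math


def transfer_time_s(num_requests: int,
                    total_bytes: int,
                    rtt_s: float,
                    bandwidth_bytes_per_s: float,
                    parallel: int = 1) -> float:
    """Rough estimate: one round trip per batch of requests, plus payload time."""
    rounds = math.ceil(num_requests / parallel)
    return rounds * rtt_s + total_bytes / bandwidth_bytes_per_s


# Hypothetical scenario: 40 files of 2,000 bytes each on a slow mobile link.
RTT_S = 0.3
BANDWIDTH = 100_000  # bytes per second
TOTAL_BYTES = 40 * 2_000

many_small = transfer_time_s(40, TOTAL_BYTES, RTT_S, BANDWIDTH)
one_combined = transfer_time_s(1, TOTAL_BYTES, RTT_S, BANDWIDTH)
```

In this model the 40 sequential requests take about 12.8 seconds, almost all of it latency, while a single combined file takes about 1.1 seconds. The opposite case, one very large file, is not penalized by this simple formula, but it does delay the moment the first useful result can be shown, which is why splitting it and loading parts asynchronously can still feel faster.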

Epilogue

This is just a short sample of performance best practices for native mobile apps. It shows that some of the principles behind well-performing native apps and web sites are not that different. Eliminate unnecessary downloads (with respect to both the number of bytes and the number of requests), and manage the rest to make good use of the network by leveraging parallelization and asynchronous downloads. While with web sites you relegate many of those tasks to the browser, with native apps it’s mostly up to you. The room for performance tweaks is much larger, but so is the room for mistakes. Thus, if there’s one important takeaway, it’s to always test your apps early and never leave performance to chance.