Brian Pane (@brianpane) is an Internet technology and product generalist. He has worked at companies including Disney, CNET, F5, and Facebook; and all along the way he's jumped at any opportunity to make software faster.

Introduction: falling down the stairs

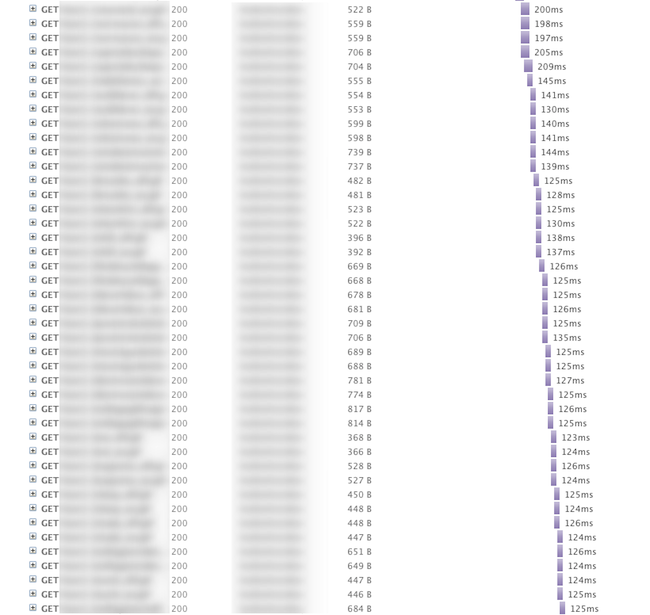

The following image is part of a waterfall diagram showing the HTTP requests that an IE8 browser performed to download the graphics on the home page of an e-commerce website.

(Note: The site name and URLs are blurred to conceal the site’s identity. It would be unfair to single out one site by name as an example of poor performance when, as we’ll see later, so many others suffer the same problem.)

The stair-step pattern seen in this waterfall sample shows several noteworthy things:

- The client used six concurrent, persistent connections per server hostname, a typical configuration among modern desktop browsers.

- On each of these connections, the browser issued HTTP requests serially: it waited for a response to each request before sending the next request.

- All of the requests in this sequence were independent of each other; the image URLs were specified in a CSS file loaded earlier in the waterfall. Thus, significantly, it would be valid for a client to download all these images in parallel.

- The round-trip time (RTT) between the client and server was approximately 125ms. Thus many of these requests for small objects took just over 1 RTT. The elapsed time the browser spent downloading all N of the small images on the page was very close to (N * RTT / 6), demonstrating that the download time was largely a function of the number of HTTP requests (divided by six thanks to the browser’s use of multiple connections).

- The amount of response data was quite small: a total of 25KB in about 1 second during this part of the waterfall, for an average throughput of under 0.25 Mb/s. The client in this test run had several Mb/s of downstream network bandwidth, so the serialization of requests resulted in inefficient utilization of the available bandwidth.

Current best practices: working around HTTP

There are several well-established techniques for avoiding this stair-step pattern and its (N * RTT / 6) elapsed time. Besides using CDNs to reduce the RTT and client-side caching to reduce the effective value of N, the website developer can apply several content optimizations:

- Sprite the images.

- Inline the images as data: URIs in a stylesheet.

- If some of the images happen to be gradients or rounded corners, use CSS3 features to eliminate the need for those images altogether.

- Apply domain sharding to increase the denominator of (N * RTT / 6) by a small constant factor.

Although these content optimizations are well known, examples like the waterfall above show that they are not always applied. In the author’s experience, even performance-conscious organizations sometimes launch slow websites, because speed is just one of many priorities competing for limited development time.

Thus an interesting question is: how well has the average website avoided the stair-step HTTP request serialization pattern?

An experiment: mining the HTTP Archive

The HTTP Archive is a database containing detailed records of the HTTP requests–including timing data with 1ms resolution–that a real browser issued when downloading the home pages of tens of thousands of websites from the Alexa worldwide top sites list.

With this data set, we can find serialized sequences of requests in each webpage. The first step is to download each page’s HAR file from the HTTP Archive. This file contains a list of the HTTP requests for the page, and we can find serialized sequences of requests based on a simple, heuristic definition:

- All the HTTP requests in the serialized sequence must be GETs for the same scheme:host:port.

- Each HTTP transaction except the first must begin immediately upon the completion of some other transaction in the sequence (within the 1ms resolution of the available timing data).

- Each transaction except the last must have an HTTP response status of 2xx.

- Each transaction except the last must have a response content-type of image/png, image/gif, or image/jpeg.

This definition captures the concept of a set of HTTP requests that are run sequentially because the browser lacks a way to run them in parallel, rather than because of content interdependencies among the requested resources. The definition errs on the side of caution by excluding non-image requests, on the grounds that a JavaScript, CSS, or SWF file might be a prerequisite for any request that follows. In the discussion that follows, we err slightly on the side of optimism by assuming that the browser knew the URLs of all the images in a serialized sequence at the beginning of the sequence.

Results: serialization abounds

This histogram shows the distribution of the longest serialized request sequences per page among 49,854 web pages from the HTTP Archive’s December 1, 2011 data set:

In approximately 3% of the webpages in this survey, there is no serialization of requests (i.e., the longest serialized request length is one). From a request parallelization perspective, these pages already are quite well optimized.

In the next 30% of the webpages, the longest serialized request sequence has a length of two or three. These pages might benefit modestly from increased request parallelization, and a simple approach like domain sharding would suffice.

The remaining two thirds of the webpages have serialized request sequences of length 4 or greater. While content optimizations could improve the request parallelization of these pages, the fact that so many sites have so much serialization suggests that the barriers to content optimization are nontrivial.

Recommendations: time to fix the protocols

One way to speed up websites without content optimization would be through more widespread implementation of HTTP request pipelining. HTTP/1.1 has supported pipelining since RFC 2068, but most desktop browsers have not implemented the feature due to concerns about broken proxies that mishandle pipelined requests. In addition, head-of-queue blocking is a nontrivial problem; recent efforts have focused on ways for the server to give the clients hints about what resources are safe to pipeline. Mobile browsers, however, are beginning to use pipelining more commonly.

Another approach is to introduce a multiplexing session layer beneath HTTP, so that the client can issue requests in parallel. An example of this strategy is SPDY, supported currently in Chrome and soon in Firefox.

Whether through pipelining or multiplexing, it appears worthwhile for the industry to pursue protocol-level solutions to increase HTTP request parallelization.