Steve is Chief Performance Officer at Fastly developing web performance services. He previously served as Google's Head Performance Engineer and Chief Performance Yahoo!. Prior to that Steve worked at General Magic, WhoWhere?, and Lycos, and co-founded Helix Systems and CoolSync. Steve is the author of High Performance Web Sites and Even Faster Web Sites. He is the creator of many performance tools and services including YSlow, the HTTP Archive, Cuzillion, Jdrop, ControlJS, and Browserscope. He serves as co-chair of Velocity, the web performance and operations conference from O'Reilly, and is co-founder of the Firebug Working Group. He taught CS193H: High Performance Web Sites at Stanford.

At lunch this week, Stoyan and I were discussing Facebook’s async loading pattern. It looks like this:

```javascript
(function(d, s, id){
  var js, fjs = d.getElementsByTagName(s)[0];
  if (d.getElementById(id)) {return;}
  js = d.createElement(s); js.id = id;
  js.src = "//connect.facebook.net/en_US/sdk.js";
  fjs.parentNode.insertBefore(js, fjs);
}(document, 'script', 'facebook-jssdk'));
```

This is the common async pattern of creating a SCRIPT element, setting its SRC, and adding it to the DOM. But Stoyan pointed out an optimization that I had never seen before (the if (d.getElementById(id)) {return;} line): if the async SCRIPT element already exists, then bail. This is easily done by giving the SCRIPT element an ID and checking for that ID on subsequent calls.
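The same check can be factored into a reusable helper. This is a minimal sketch, not Facebook's code: the name `insertScriptOnce` is hypothetical, and it takes the document as a parameter (as the snippet does with `d`) so the logic can be exercised outside a browser. It assumes at least one SCRIPT element is already in the page, which holds when it is called from an inline snippet.

```javascript
// Hypothetical helper generalizing the quick-exit check from the snippet.
// Returns true if a new SCRIPT element was inserted, false if one with the
// same id already existed.
function insertScriptOnce(doc, src, id) {
  // Quick exit: an earlier call already created this SCRIPT element.
  if (doc.getElementById(id)) {
    return false;
  }
  const js = doc.createElement("script");
  js.id = id; // the id is what later calls look for
  js.src = src;
  // Insert before the first SCRIPT in the page, as the snippet does.
  const firstScript = doc.getElementsByTagName("script")[0];
  firstScript.parentNode.insertBefore(js, firstScript);
  return true;
}
```

In a page, running `insertScriptOnce(document, "//connect.facebook.net/en_US/sdk.js", "facebook-jssdk")` several times still leaves a single SCRIPT element, so the script is fetched and executed once.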

The motivation for this optimization is to avoid the performance costs of loading a script multiple times. Duplicate scripts occur often, especially for 3rd party widgets (such as the Like, +1, and Tweet buttons) that might get copy-and-pasted multiple times in a single page.

It got me wondering:

- What are the performance costs of duplicate scripts?

- How often does it happen?

- How can the performance penalty be avoided?

Performance Cost of Duplicate Scripts

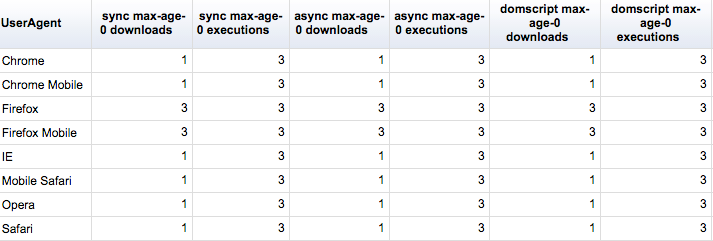

External scripts make pages slower when they are downloaded and executed. In order to test the performance cost of duplicate scripts, the Duplicate Scripts test page includes the same script three times and measures the number of times the script is downloaded and the number of times it's executed.

The performance costs may vary depending on how the script is inserted, so three techniques are tested:

- sync – The script is inserted using HTML: `<script src="">`.

- async – The ASYNC attribute is added to the HTML: `<script src="" async>`.

- domscript – The script is inserted by creating a SCRIPT element, setting its SRC, and inserting it into the DOM.
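For concreteness, here is one way a test page could repeat the same script three times with each technique. This is a hypothetical sketch, not the actual test page's source: the function name `duplicateIncludeMarkup` and the `/dup.js` URL used below are illustrative.

```javascript
// Hypothetical generator for the markup of a duplicate-scripts test page.
// Returns `times` copies of the same include, one per line.
function duplicateIncludeMarkup(technique, url, times = 3) {
  let include;
  switch (technique) {
    case "sync": // plain blocking <script src>
      include = `<script src="${url}"></script>`;
      break;
    case "async": // same tag with the ASYNC attribute
      include = `<script src="${url}" async></script>`;
      break;
    case "domscript": // inline code that creates and inserts a SCRIPT element
      include =
        "<script>(function () {" +
        'var s = document.createElement("script");' +
        `s.src = ${JSON.stringify(url)};` +
        'document.getElementsByTagName("head")[0].appendChild(s);' +
        "})();</script>";
      break;
    default:
      throw new Error(`unknown technique: ${technique}`);
  }
  return Array(times).fill(include).join("\n");
}
```

For example, `duplicateIncludeMarkup("async", "/dup.js")` yields three identical `<script src="/dup.js" async></script>` lines.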

The Cache-Control header is also tested using these four conditions:

- max-age=600 – The script is cached for 10 minutes.

- max-age=0 – The script is cached for 0 seconds.

- max-age=0, no-store, no-cache, must-revalidate – The script is aggressively set to be uncacheable.

- none – No Cache-Control header is specified, allowing the browser to fall back to its heuristic cache lifetime.
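To make the four conditions concrete, the sketch below reduces a Cache-Control value to the explicit freshness lifetime it grants. It is a simplification for illustration only: the function name is hypothetical, and real HTTP caching has more rules (Expires, Last-Modified, and so on). Here no-store and no-cache are treated as a lifetime of zero, and a missing max-age returns null to signal that the browser is left to its heuristic.

```javascript
// Simplified reading of a Cache-Control header value.
// Returns seconds of explicit freshness, 0 if the response may not be stored
// or must be revalidated, or null if no explicit lifetime is given.
function explicitFreshnessSeconds(cacheControl) {
  if (cacheControl == null || cacheControl.trim() === "") {
    return null; // "none": no header, so the browser uses its heuristic
  }
  const directives = cacheControl
    .toLowerCase()
    .split(",")
    .map((d) => d.trim());
  if (directives.includes("no-store") || directives.includes("no-cache")) {
    return 0;
  }
  const maxAge = directives.find((d) => d.startsWith("max-age="));
  if (maxAge === undefined) {
    return null;
  }
  const seconds = Number.parseInt(maxAge.slice("max-age=".length), 10);
  return Number.isNaN(seconds) ? null : seconds;
}
```

Applied to the four conditions, this gives 600, 0, 0, and null respectively.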

These two variables produce 12 different test pages. The final variable is the browser itself, since different browsers behave differently. To get results across many browsers, I created a Browserscope user test and tweeted a request for help from the community, which yielded results from over fifty browsers.
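The count of 12 comes from pairing every insertion technique with every Cache-Control condition (3 × 4). A quick sketch of that enumeration, using the labels from the lists above:

```javascript
const techniques = ["sync", "async", "domscript"];
const cacheConditions = [
  "max-age=600",
  "max-age=0",
  "max-age=0, no-store, no-cache, must-revalidate",
  "none", // no Cache-Control header at all
];

// One test page per (technique, Cache-Control) pair.
const testPages = techniques.flatMap((technique) =>
  cacheConditions.map((cacheControl) => ({ technique, cacheControl }))
);

console.log(testPages.length); // 12
```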

It’s important for widget developers and website owners to keep these takeaways in mind: if a script is included multiple times, it gets executed every time. In addition, Firefox downloads the script multiple times unless the script is explicitly cacheable.

Common or Atypical?

Stoyan’s optimization for the Facebook widget suddenly makes a lot of sense. Their script’s Cache-Control header contains “max-age=1200”, so they don’t have to worry about multiple downloads in Firefox. And the check that the script is never inserted more than once (if (d.getElementById(id)) {return;}) ensures that it is executed only once, regardless of how many times the snippet is pasted into the page. Their sdk.js script contains 154K of JavaScript, so avoiding parsing and executing it multiple times improves page performance.

But is this an abstract, academic problem? Or are there actual pages that include duplicate scripts with bad caching headers and significant execution times?

To answer these questions, we turn to the HTTP Archive, a project that gathers performance information for the world’s top 300K websites. Ilya Grigorik’s companion site, BigQueri.es, provides a forum for sharing custom queries over the HTTP Archive data that he’s uploaded to BigQuery. I first ran a query to find the most popular scripts across all websites in the August 15, 2014 crawl.

I targeted August 15, 2014 because Ilya recently added a new HTTP Archive table to BigQuery that contains all of the text response bodies from the crawl (~6M responses totaling ~300GB). This is still an experimental feature, so he’s not (yet) creating the table for every crawl; the most recent one is from August 15, 2014. With this new table, we can see whether any of these scripts are included multiple times in a single page.

For example, this BigQueri.es article describes how to find pages that include plusone.js multiple times. Across the world’s top 300K URLs, there are 5573 (~2%) that include plusone.js more than once. iGadgetware loads plusone.js over 20 times. Even mainstream Google sites like the Official Google Blog, the Official Google Webmaster Central Blog, and the Inside Adsense Blog load plusone.js three or more times. The script has max-age=1800, so it won’t be downloaded multiple times in Firefox. But it has 36K of JavaScript, so parsing and executing multiple times hurts page performance.
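The actual query runs in BigQuery; the JavaScript sketch below only illustrates the core idea and is not the BigQueri.es SQL. It counts how often a script URL appears in each HTML body and keeps the pages where it appears more than once. The `{ url, body }` record shape and the function names are assumptions, not the HTTP Archive schema. Because it counts raw text matches, it also counts URLs that sit inside async snippets, so a page that pastes a guarded snippet ten times is flagged even if the script only loads once.

```javascript
// Count non-overlapping occurrences of `needle` in `body`.
function countOccurrences(body, needle) {
  if (needle === "") return 0;
  let count = 0;
  let from = body.indexOf(needle);
  while (from !== -1) {
    count += 1;
    from = body.indexOf(needle, from + needle.length);
  }
  return count;
}

// Hypothetical record shape: { url: string, body: string } per HTML document.
// Returns the pages that mention `needle` more than once, with their counts.
function pagesWithDuplicateScript(pages, needle) {
  return pages
    .map((page) => ({ url: page.url, count: countOccurrences(page.body, needle) }))
    .filter((page) => page.count > 1);
}
```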

Reusing the same query but searching for “twitter.com/widgets.js” shows 2567 (~1%) of HTML documents include it more than once. The Official Ryan Seacrest website loads widgets.js 288 times! But that’s okay because, similar to Facebook, the Twitter async snippet does a quick exit if the script has already been inserted:

```javascript
window.twttr=(function(d,s,id){
  var t,js,fjs=d.getElementsByTagName(s)[0];
  if(d.getElementById(id)){return}
  js=d.createElement(s);js.id=id;js.src="https://platform.twitter.com/widgets.js";
  fjs.parentNode.insertBefore(js,fjs);
  return window.twttr||(t={_e:[],ready:function(f){t._e.push(f)}})
}(document,"script","twitter-wjs"));
```
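As quoted, this snippet has one wrinkle worth knowing about: on a repeat run the quick exit is a bare `return`, so the function yields `undefined`, and because the result is assigned to `window.twttr`, that later copy overwrites whatever an earlier copy stored there. The sketch below keeps the quick exit but hands back the existing global instead. It is not Twitter's code: `loadWidgetOnce` is a hypothetical name, `win` and `doc` stand in for `window` and `document` so the logic can run outside a browser, and how widgets.js consumes the `_e` queue is not modeled.

```javascript
// Hypothetical variant of the snippet's logic that never clobbers the global.
function loadWidgetOnce(win, doc, globalName, src, id) {
  const existing = win[globalName];
  // Quick exit, but return the current global instead of undefined, so
  // `win[globalName] = loadWidgetOnce(...)` leaves it unchanged.
  if (doc.getElementById(id)) {
    return existing;
  }
  const js = doc.createElement("script");
  js.id = id;
  js.src = src;
  const firstScript = doc.getElementsByTagName("script")[0];
  firstScript.parentNode.insertBefore(js, firstScript);
  if (existing) {
    return existing;
  }
  // Same stub shape as the snippet: ready() callbacks queue in _e until the
  // library loads.
  const stub = {
    _e: [],
    ready(f) {
      stub._e.push(f);
    },
  };
  return stub;
}
```

In a page this would be used as `window.twttr = loadWidgetOnce(window, document, "twttr", "https://platform.twitter.com/widgets.js", "twitter-wjs");`, and repeated copies keep the same stub along with any callbacks already queued on it.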

Unfortunately, other sites like extremetech.com and toprankblog.com load widgets.js more than ten times using the synchronous pattern, so its 108K of JavaScript is parsed and executed more than ten times.

The third most popular script is osd.js from Google Ads. After some investigation, I found that it gets loaded by gpt.js. Reusing the same query for “gpt.js” found 792 sites that include it more than once. Oh Sweet Basil loads it 14 times using synchronous markup. Weighing in at 45K, that’s a lot of JavaScript to execute 14 times. Zvents loads it five times using an async pattern, but there’s no quick bail, so gpt.js gets parsed and executed five times. SalesSpider, on the other hand, loads it five times but has a quick exit that they appear to have crafted themselves. Bravo!
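Another place for a quick exit is inside the script file itself, which also covers synchronous includes like the ones above, where no snippet runs before the script. The sketch below uses a global flag. It is not SalesSpider's, Google's, or Twitter's code: the flag and counter names are made up, and the file body is wrapped in a function so that each call simulates the browser executing one more copy of the file. The browser still has to fetch (or pull from cache) and process every copy, so this limits the cost of re-execution rather than removing the duplicate.

```javascript
// Stand-in for the contents of a widget file. `global` would be the page's
// global object (window); each call simulates one more copy executing.
function widgetFileBody(global) {
  if (global.__exampleWidgetLoaded) {
    return; // an earlier copy already ran the setup below
  }
  global.__exampleWidgetLoaded = true;
  // Expensive setup (scanning the DOM, rendering buttons, ...) would go here;
  // the counter just records how often it ran.
  global.exampleWidgetSetupRuns = (global.exampleWidgetSetupRuns || 0) + 1;
}
```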

Getting back to Facebook, let’s look for “//connect.facebook.net/en_US/all.js” since it’s more popular than “sdk.js”. The HTTP Archive query shows there are 1489 sites that load all.js more than once. Cuckoo for Coupon Deals loads it ten times, but since it uses the async snippet with the quick exit, the script is only loaded and executed once. In fact, every website I checked that includes all.js uses the Facebook async pattern with the quick exit, avoiding the performance pitfalls of duplicate scripts.

Avoid the Pain

Including a script multiple times does hurt performance – the script is executed multiple times and may even be downloaded multiple times in Firefox. This isn’t an academic problem. Duplicate scripts happen frequently in real websites.

Website owners can avoid this performance problem by removing duplicate scripts. That’s sometimes easier said than done. There might be multiple development teams contributing code to the page. Or a third-party snippet might get copy-and-pasted multiple times.
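A quick way for a site owner to spot duplicates on a live page is to list the src of every SCRIPT element and look for repeats. A small sketch (the function name is hypothetical): in a browser console it can be fed `Array.from(document.scripts, (s) => s.src)`, where `document.scripts` is the standard collection of SCRIPT elements and `src` is the resolved URL, or an empty string for inline scripts. It only catches exact URL matches, and snippets with a quick exit never create a second element, so those will not show up.

```javascript
// Return [url, count] pairs for script URLs that appear more than once.
function findDuplicateScripts(srcs) {
  const counts = new Map();
  for (const src of srcs) {
    if (!src) continue; // inline scripts have an empty src
    counts.set(src, (counts.get(src) || 0) + 1);
  }
  return [...counts].filter(([, n]) => n > 1);
}
```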

Snippet developers can help solve the problem by following Facebook’s and Twitter’s optimization of ensuring that the async script element is only created once. This avoids needlessly re-executing the same JavaScript over and over again. It’s also important that the script be cacheable for a non-zero number of seconds to avoid multiple downloads in Firefox.
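On the server side, that means sending the script with a Cache-Control header that has a positive max-age. Below is a minimal Node.js sketch using the built-in `http` module; the function names, the 600-second default (borrowed from the max-age=600 test condition), and the port in the usage comment are illustrative choices, not values prescribed by the test results.

```javascript
const http = require("http");

// Response headers for a widget script. A positive max-age lets repeat
// includes of the same URL be served from the browser cache.
function widgetScriptHeaders(maxAgeSeconds) {
  if (!Number.isInteger(maxAgeSeconds) || maxAgeSeconds <= 0) {
    throw new RangeError("maxAgeSeconds must be a positive integer");
  }
  return {
    "Content-Type": "text/javascript",
    "Cache-Control": `max-age=${maxAgeSeconds}`,
  };
}

// Serves the same script body for every request.
function createWidgetServer(scriptBody, maxAgeSeconds = 600) {
  const headers = widgetScriptHeaders(maxAgeSeconds);
  return http.createServer((req, res) => {
    res.writeHead(200, headers);
    res.end(scriptBody);
  });
}

// Example: createWidgetServer("/* widget code */").listen(8080);
```

Rejecting a zero max-age up front keeps the server from accidentally reproducing the max-age=0 condition that triggers repeat downloads in Firefox.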