TL;DR: This post describes a quick way to measure “ad weight”, as opposed to the well-known “page weight”. Ad weight is an important consideration because it represents the bytes attributable to revenue.

Background & Motivation

The web performance community knows a lot about page weight and has built plenty of tooling around it. For media sites whose revenue comes from monetizing the end user’s attention via advertisements, it’s important to also track the weight of ad-related code within the page. So far, I have not seen any tools that measure this metric specifically, so this post delves into a quick and dirty way of coming up with this number for any given web page.

The motivation is that excessive ad weight hurts the user experience and drives users away, which in turn decreases the value of ads placed on the site: advertisers care about reach, and a website that loses users loses reach. Since ad weight is a subset of page weight, the standard concerns about page weight apply to ads as well.

Ad Tags

An ad server is a web-based tool used by publishers, networks, and advertisers to help with ad management, campaign management, and ad trafficking. An ad server also provides reporting on the ads served on the website. Finally, an ad server also serves the creative, meaning that the ad server or ad serving company delivers the ad to each user’s browser. The client-side component of this server is a tag manager or tag container: an ad tagging library that can dynamically build ad requests for the ad server. Most publishers these days use DoubleClick for Publishers (DFP) or AppNexus. The most common tagging library for Google DFP is the Google Publisher Tag (GPT). This article focuses on GPT because it has the majority market share among web publishers. The same approach can be applied to the AppNexus Seller Tag (AST) if you are using AppNexus.

Manual Method

Now that we understand where ads come from, a simple way to measure “ad weight” is to load the page once with its regular ad load and once without any ads, and then compute the ad weight as the difference in page weight between the two loads.
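As a worked example of that subtraction, here is a minimal sketch. The function name `ad_weight` and the byte counts are made up for illustration; plug in the totals from your own two loads.

```python
def ad_weight(regular_bytes, blocked_bytes):
    """Return (ad bytes, ad share of the page) for one pair of page loads."""
    if regular_bytes <= 0:
        raise ValueError("regular_bytes must be positive")
    # Ads vary between loads, so a noisy pair can come out negative; clamp at zero.
    ad_bytes = max(regular_bytes - blocked_bytes, 0)
    return ad_bytes, ad_bytes / regular_bytes


# Hypothetical numbers: 4.0 MB with ads, 3.0 MB with ads blocked.
ad_bytes, share = ad_weight(4_000_000, 3_000_000)
print("ad weight: %d bytes (%.0f%% of the page)" % (ad_bytes, share * 100))
```

Clamping at zero is a design choice for this sketch: a single noisy pair of loads should not report a negative ad weight.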

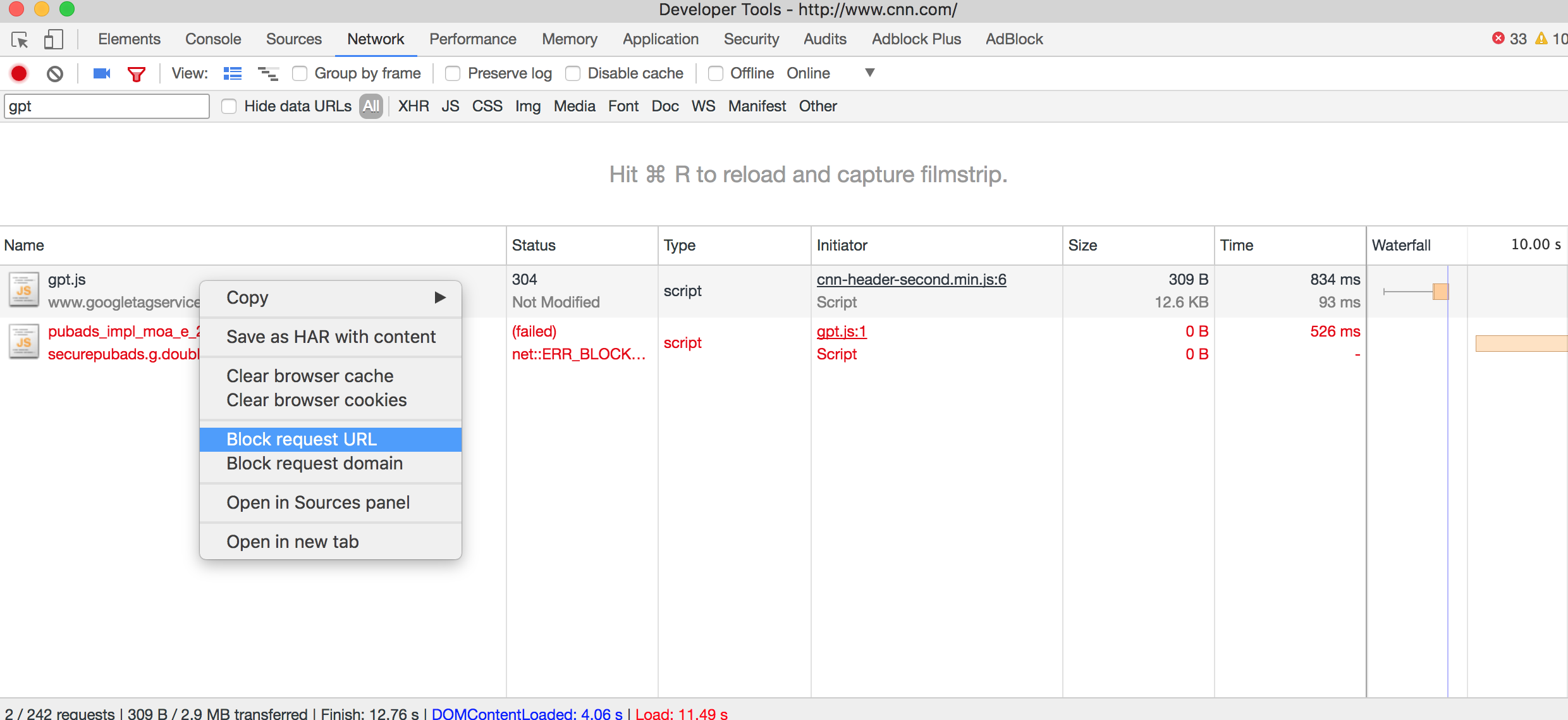

A manual way to do this is to note the page weight in developer tools, then use “request blocking” to block GPT (http://www.googletagservices.com/tag/js/gpt.js) and reload the page. Blocking this one script removes the ads because all ad code emanates from its execution.

Alternatively, you can use your favorite adblocker: load the page and note its page weight before and after enabling the adblocker.

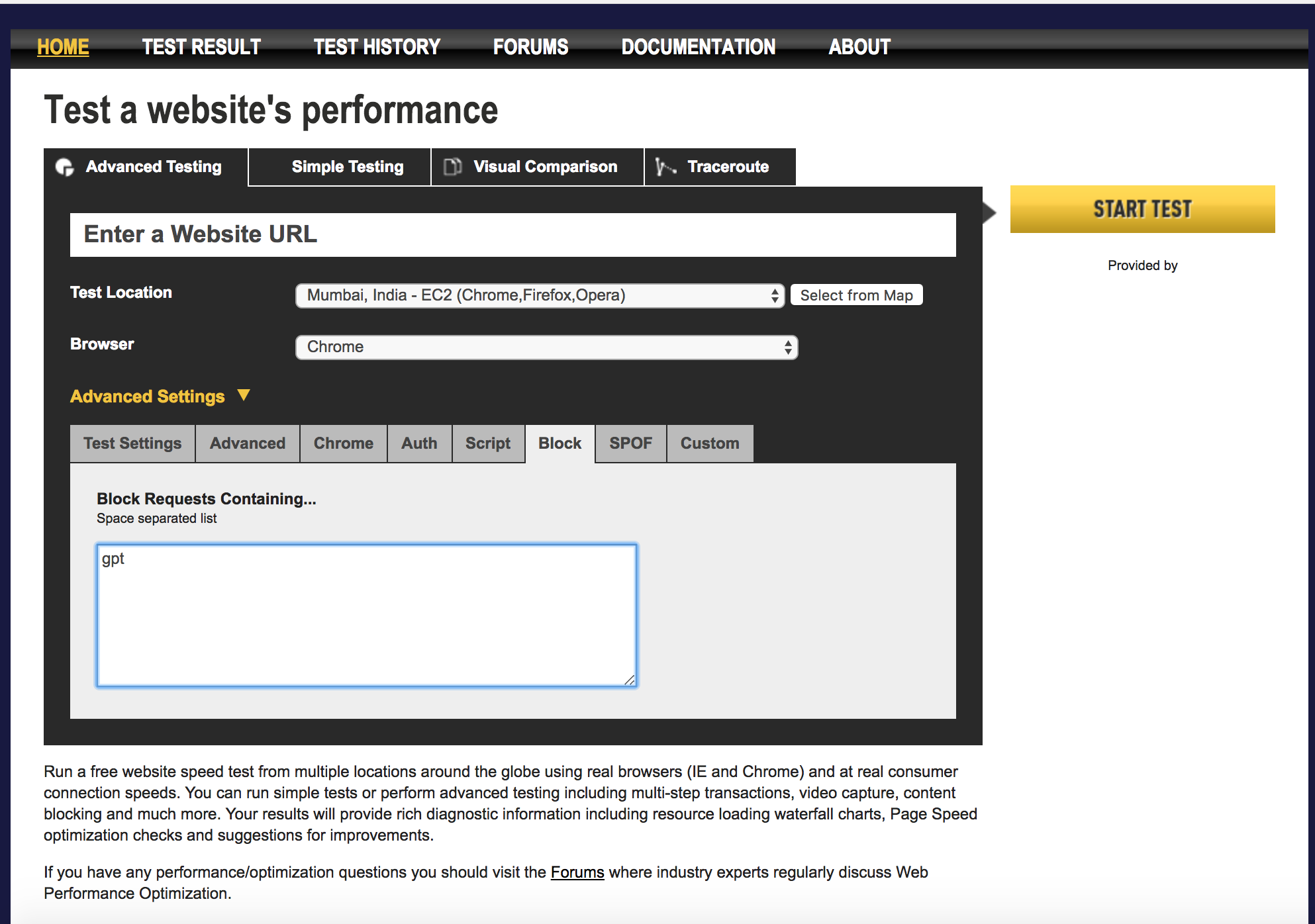
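If you would rather not read the totals off the screen, one option is to export a HAR file from the developer tools Network panel for each load and subtract the totals. The sketch below is only an illustration, not part of the original method. It assumes Chrome-style HAR exports, where each entry carries a non-standard `_transferSize` field, and falls back to the standard `headersSize` and `bodySize` fields otherwise. The function names are hypothetical.

```python
import json


def har_bytes(har):
    """Sum the bytes received for every entry in a parsed HAR document."""
    total = 0
    for entry in har["log"]["entries"]:
        resp = entry["response"]
        size = resp.get("_transferSize")  # Chrome-specific extension field
        if size is None:
            # Standard HAR fields; -1 means "unknown", so count it as 0.
            size = max(resp.get("headersSize", 0), 0) + max(resp.get("bodySize", 0), 0)
        total += max(size, 0)
    return total


def har_ad_weight(regular_path, blocked_path):
    """Ad weight in bytes from two HAR files (regular load, ad-blocked load)."""
    with open(regular_path, encoding="utf-8") as f:
        regular = json.load(f)
    with open(blocked_path, encoding="utf-8") as f:
        blocked = json.load(f)
    return har_bytes(regular) - har_bytes(blocked)
```

With two exports saved under placeholder names, the call would look like `har_ad_weight("regular.har", "blocked.har")`.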

So far I have shown manual ways of calculating ad weight on your own device, but we need a shareable way of doing the same. So let’s use our favorite tool, WebPagetest (WPT). Run your page through WebPagetest as usual; then, to remove the ads, run it again while blocking requests that contain the substring “gpt”.

For publishers that use Google DFP, a WPT run that blocks “gpt” has the same effect as running WPT with an adblocker. For example, nytimes.com carries around 1.1 MB in ads alone.

Putting it all together

Now that we know the method, let’s automate it in a quick and dirty way using the WPT API. You will need a WPT API key, which can be generated here.

Now simply run the following script supplying the URL of your choice as the argument to the script.

#!/usr/bin/env python3
# Estimate "ad weight": bytes of a normal load minus bytes of a load with GPT blocked.
import sys
import time

import requests

api_key = '<your_api_key>'
wpt_server = 'http://www.webpagetest.org'
url = sys.argv[1]


def hbytes(num):
    """Format a byte count as a human-readable string."""
    for x in ['bytes', 'KB', 'MB', 'GB']:
        if num < 1024.0:
            return '%3.1f%s' % (num, x)
        num /= 1024.0
    return '%3.1f%s' % (num, 'TB')


def submit(label, request_id, block=None):
    """Submit a single first-view test run and return the parsed JSON response."""
    params = {'k': api_key, 'url': url, 'fvonly': 1, 'location': 'Dulles.Native',
              'video': 1, 'f': 'json', 'label': label, 'r': request_id}
    if block:
        params['block'] = block  # block requests whose URL contains this substring
    return requests.post(wpt_server + '/runtest.php', params=params).json()


try:
    regular = submit('regular', 1234)
    adb = submit('adblocked', 4321, block='gpt')
    print('{0}/video/compare.php?tests={1},{2}'.format(
        wpt_server, regular['data']['testId'], adb['data']['testId']))

    # Poll both tests every 30 seconds, up to 33 times.
    for _ in range(33):
        r = requests.get(regular['data']['jsonUrl']).json()
        a = requests.get(adb['data']['jsonUrl']).json()
        print(r['statusText'], a['statusText'])
        if r['statusCode'] == 200 and a['statusCode'] == 200:
            break
        time.sleep(30)
    else:
        sys.exit('Timed out waiting for the tests to finish')

    # Ad weight = bytes of the regular load minus bytes of the ad-blocked load.
    diff = int(r['data']['runs']['1']['firstView']['bytesIn']) \
        - int(a['data']['runs']['1']['firstView']['bytesIn'])
    print('%s:%s ' % (url, hbytes(diff)))
except requests.ConnectionError as e:
    sys.exit('Unable to connect to WPT Rest API! Error: {0}'.format(e))

Results

The above code constructs two test requests (one normal and one blocking ads), submits them to the WebPagetest Dulles location for a single run at native connection speed, waits for both tests to finish, and then calculates the delta between their byte counts.

python adWeight.py http://www.sfgate.com
http://www.webpagetest.org/video/compare.php?tests=171222_B3_a0d203fc64e42f5beaedbc0a7e337784,171222_8T_16f7abcac5e8967da0e5ae2fbda79495
Waiting behind 12 other tests... Waiting behind 13 other tests...
Test Started 15 seconds ago Test Started 13 seconds ago
Test Started 39 seconds ago Test Complete
Test Started 1 minute ago Test Complete
Test Complete Test Complete
http://www.sfgate.com:3.2MB

As you can see, the script first prints a comparison URL that you can open in a browser to generate video, compare waterfalls, etc. While the tests are queued or running, it loops, printing the test status just like the WPT UI. Finally it gathers the results, which show that sfgate.com spends 3.2 MB on advertisement-related code in a single page load.

Go ahead and try it on your favorite websites to see how much of the page weight can be attributed to ads.

Discussion

This post gave a crude way to get a sense of "ad weight". Ads are highly targeted by design, so they vary by user, geography, time of day, cookie, etc. A single measurement is therefore not enough; a statistical aggregate across many page loads needs to be generated to get a real sense of "ad weight". One way to generate this is RUM for ads (which does not exist yet!), so that we can pinpoint exactly how many bytes are spent on ads versus editorial content. In fact, publishers should have a budget for ad weight, make sure ad weight never exceeds 20% of the page weight, and use the perf budget process to audit this usage and prune ad code and networks that bloat the page.
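Until something like RUM for ads exists, a rough aggregate can be built by repeating the paired measurement several times and summarising the results. The sketch below is one possible way to do that, not an established tool. The function name `ad_weight_report`, the choice of the median as the aggregate, and the sample byte counts are all assumptions; the 20% default comes from the budget suggested above.

```python
from statistics import median


def ad_weight_report(samples, budget=0.20):
    """Summarise (regular_bytes, blocked_bytes) pairs from repeated page loads."""
    # Clamp each difference at zero so a noisy pair cannot yield negative ad weight.
    ad_bytes = [max(reg - blk, 0) for reg, blk in samples]
    shares = [ad / reg for ad, (reg, _) in zip(ad_bytes, samples)]
    median_share = median(shares)
    return {
        "median_ad_bytes": median(ad_bytes),
        "median_share": median_share,
        "within_budget": median_share <= budget,
    }


# Hypothetical byte counts from three pairs of runs.
samples = [(4_000_000, 3_000_000), (5_000_000, 3_000_000), (4_000_000, 3_400_000)]
report = ad_weight_report(samples)
print(report)
```

The median is used here because a single unusually heavy ad can skew a mean; a percentile would be an equally reasonable choice for a budget check.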