Tim Vereecke (@TimVereecke) loves speeding up websites and has been exploring the technical and business aspects of WebPerf for 15+ years. He is a web performance architect at Akamai and also runs scalemates.com: the largest (and fastest) scale modeling website on the planet.

Redirects are notoriously bad for performance. This article explains how to remove redirect penalties using a new technique called Redirect Liquidation.

In a nutshell

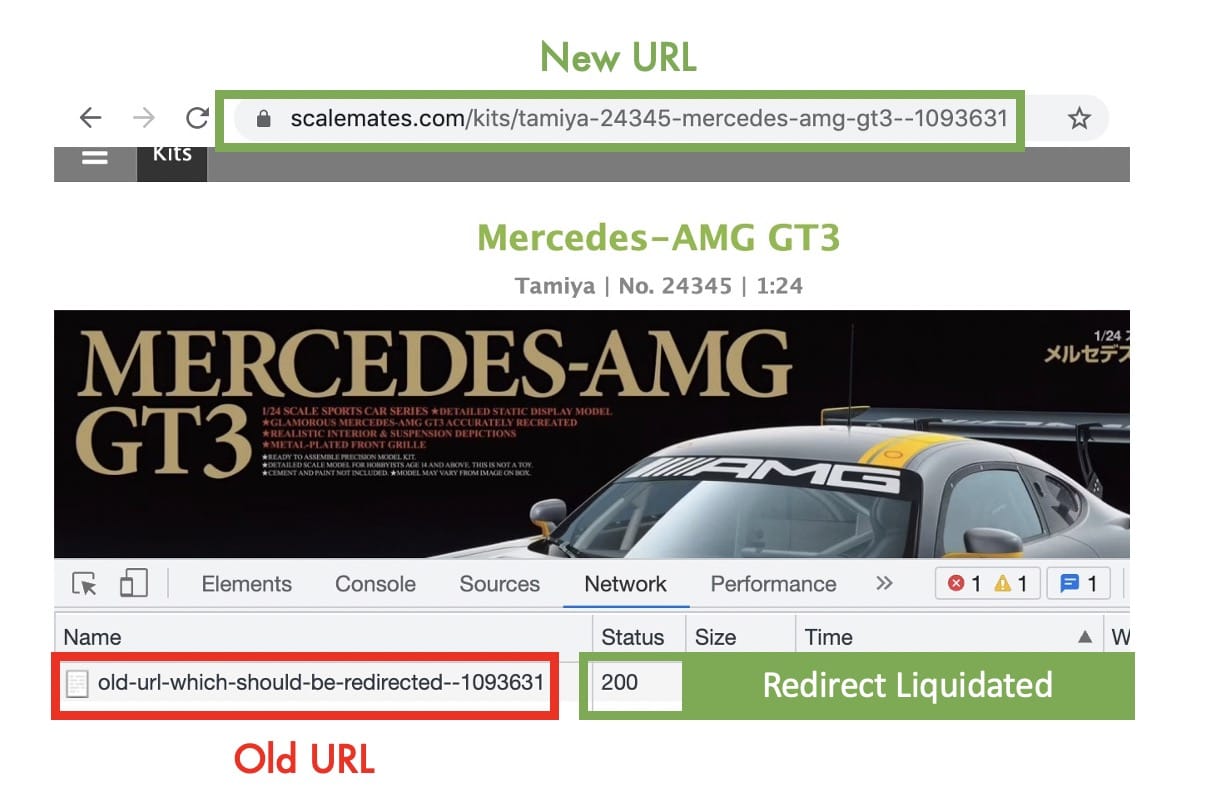

Instead of returning a 301/302/307 status code when an old URL is requested, you serve the new content directly and inject a <script> into your HTML to update the Location bar.

...

<script>

history.replaceState(null, '', '/new/url.html');

</script>

...

The injected <script> replaces the requested old URL with the new URL in the Location bar without triggering a redirect. It relies on history.replaceState, which is part of the History API: a well-supported API with an adoption rate of 96%+ at the end of 2021.
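To make the injection step concrete, here is a minimal sketch of how an origin or edge function could add the snippet to an HTML response. The helper names `buildLiquidationScript` and `injectLiquidationScript` are hypothetical and not taken from the RedirectLiquidator source; the sketch assumes the full HTML is available as a string.

```javascript
// Hypothetical helper: build the inline snippet that rewrites the Location bar.
function buildLiquidationScript(newPath) {
  // JSON.stringify yields a safely quoted JS string literal; escaping "<"
  // prevents a "</script>" sequence inside the path from closing the tag early.
  const literal = JSON.stringify(newPath).replace(/</g, "\\u003c");
  return `<script>history.replaceState(null, '', ${literal});</script>`;
}

// Hypothetical helper: inject the snippet right after the opening <head> tag
// so the Location bar is corrected as early as possible.
function injectLiquidationScript(html, newPath) {
  const script = buildLiquidationScript(newPath);
  const headOpen = /<head(\s[^>]*)?>/i;
  if (headOpen.test(html)) {
    // A replacer function avoids "$" sequences in the path being
    // interpreted as replacement patterns.
    return html.replace(headOpen, (match) => match + script);
  }
  // Assumption: without a <head> tag, appending at the end is the safest spot.
  return html + script;
}
```

For example, `injectLiquidationScript(pageHtml, "/new/url.html")` returns the new page's HTML with the snippet placed directly after `<head>`.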

Implementation

Redirect Liquidation can be implemented at your Origin, at the Edge (Source code and further Edge instructions on GitHub: RedirectLiquidator Akamai EdgeWorker) or in a mixed mode.

This feature should not be enabled for bots (e.g. Google's crawler). When a bot crawls an outdated link, it must receive the actual 301/302/307 response. This ensures that legacy URLs in the index are replaced with the new ones.

Depending on your toolset, this can be done with User-Agent matching or with a more advanced Bot Manager solution from your CDN.
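As a rough illustration of the User-Agent approach, the sketch below decides between a real redirect and a liquidated response. The pattern list, the function names and the shape of the returned object are all assumptions made for this example; a production setup would likely rely on a maintained bot list or a Bot Manager.

```javascript
// Illustrative pattern only (assumption): not a complete list of crawlers.
const BOT_PATTERN = /bot|crawler|spider|slurp/i;

// Hypothetical helper: a missing User-Agent is treated as a bot, so unknown
// clients fall back to the safe, real redirect.
function isLikelyBot(userAgent) {
  if (typeof userAgent !== "string" || userAgent === "") return true;
  return BOT_PATTERN.test(userAgent);
}

// Hypothetical helper: describe the response for a request to an old URL.
function planResponse(userAgent, newPath) {
  if (isLikelyBot(userAgent)) {
    // Bots get the actual redirect so that search indexes are updated.
    return { status: 301, headers: { Location: newPath } };
  }
  // Everyone else gets the new content plus the history.replaceState snippet.
  return { status: 200, liquidateTo: newPath };
}
```

Falling back to the real redirect whenever the client cannot be identified keeps the behaviour correct, at the cost of a few missed liquidations.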

Results RUM

While tuning redirects I focus on 2 KPIs in my Real User Monitoring (RUM) data:

- Speed: How fast are my redirects and can I make them faster?

- Frequency: How often do redirects happen and can I avoid them?

Redirect Liquidation targets the second KPI: reducing the frequency of redirects.
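If you do not use a RUM product, both KPIs can be approximated from Navigation Timing data. The sketch below is an assumption about how such a summary could be computed and is not how mPulse calculates its metrics; `redirectKpis` is a hypothetical name, and it expects objects shaped like `PerformanceNavigationTiming` entries (`redirectCount`, `redirectStart`, `redirectEnd`). Note that browsers report these fields as zero when the redirect chain crosses origins, so this only captures same-origin redirects.

```javascript
// Hypothetical helper: summarize redirect frequency and speed over a set of
// page views, each described by a PerformanceNavigationTiming-like object.
function redirectKpis(entries) {
  const redirected = entries.filter((e) => e.redirectCount > 0);
  const totalMs = redirected.reduce(
    (sum, e) => sum + (e.redirectEnd - e.redirectStart),
    0
  );
  return {
    // Frequency: share of page views that paid a redirect penalty.
    frequency: entries.length ? redirected.length / entries.length : 0,
    // Speed: average time spent in redirects, for redirected views only.
    averageMs: redirected.length ? totalMs / redirected.length : 0,
  };
}
```

In the browser, a single page view's entry is available via `performance.getEntriesByType("navigation")`; the aggregation across visitors would happen wherever you collect your beacons.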

This mPulse RUM graph shows the number of pages with a redirect penalty over time. A significant drop is visible in the last 4 days, which is when this technique was fully rolled out on my website.

The graph also shows that not all redirects have been removed: Redirect Liquidation only works on redirects that you control, that stay on the same domain and that keep the same protocol.

The remaining redirects can be classified in these 3 buckets:

- Redirects from domain.com to www.domain.com

- Redirects from http:// to https://

- 3rd-party links typically have tracking redirects happening before hitting your domain
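The same-domain and same-protocol condition can be checked before deciding to liquidate, which also matches the fact that history.replaceState only accepts same-origin URLs. The following is a minimal sketch; `canLiquidate` is a hypothetical helper that uses the standard `URL` class.

```javascript
// Hypothetical helper: a redirect is only a candidate for liquidation when
// the target keeps the protocol and host (including port) of the request.
function canLiquidate(requestedUrl, targetUrl) {
  const from = new URL(requestedUrl);
  // Passing `from` as the base resolves relative targets such as "/new/url.html".
  const to = new URL(targetUrl, from);
  return from.protocol === to.protocol && from.host === to.host;
}
```

With this check, `canLiquidate("https://www.domain.com/old.html", "/new/url.html")` is true, while a hop from `http://` to `https://` or from `domain.com` to `www.domain.com` is false and keeps its real redirect.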

Note: The target is reducing the frequency of redirects, which results in a Time To First Byte (TTFB) improvement. Whether your redirect performance also improves depends on the speed of the remaining redirects: they can be either faster or slower than the liquidated ones.

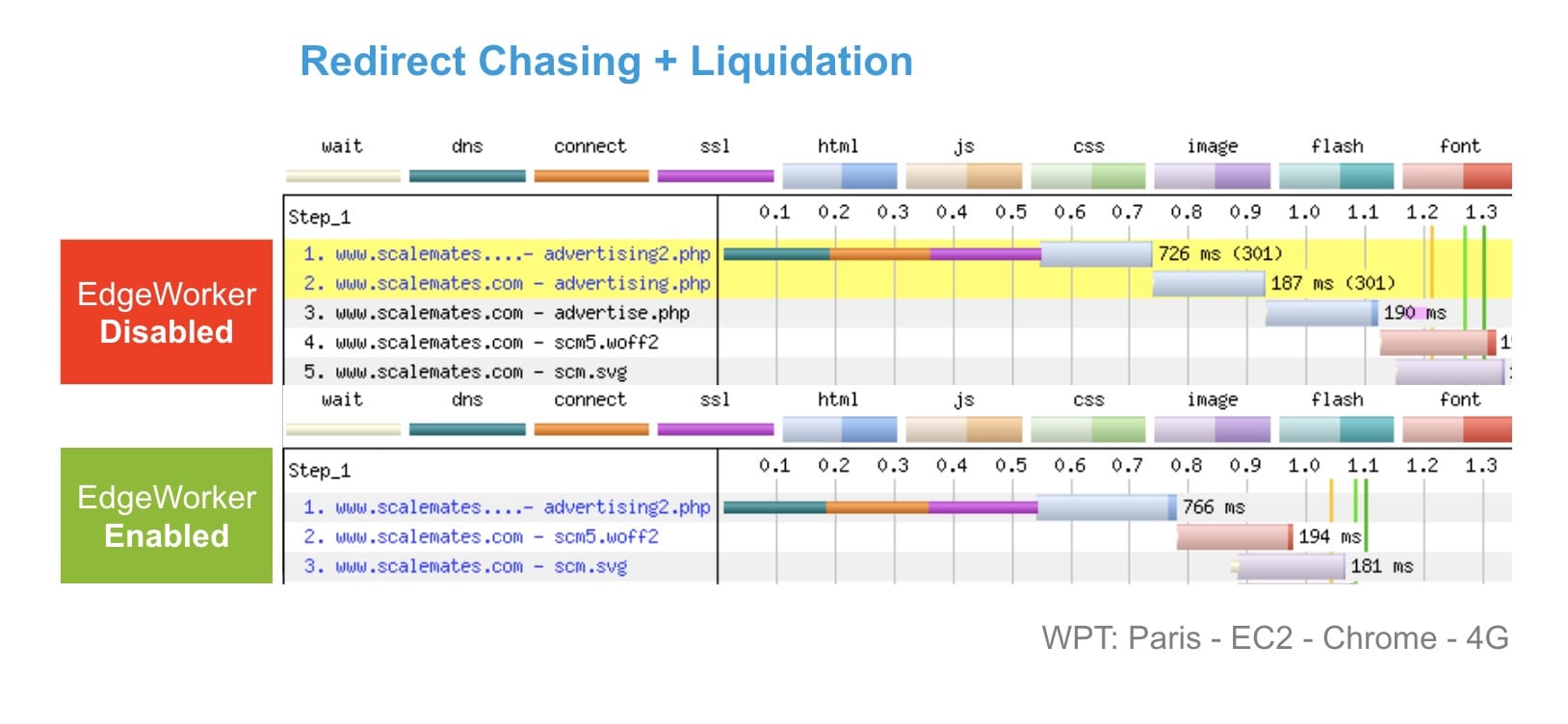

Results Synthetic

The impact on a redirect chain is visible in WebPageTest (4G connection). At the top, 2 redirects are needed before arriving at the correct link. The bottom shows how the 2 redirects were liquidated at the Edge (CDN).
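Liquidating a chain means resolving every hop up front so that the final content can be served in one response. A possible sketch is shown below; the redirect table and the `resolveRedirectChain` helper are hypothetical and only illustrate the idea, with a hop limit and loop detection as safety nets.

```javascript
// Hypothetical redirect table: old path -> next path (illustrative data only).
const redirects = new Map([
  ["/old/url.html", "/older-new/url.html"],
  ["/older-new/url.html", "/new/url.html"],
]);

// Hypothetical helper: follow the chain to its final target.
// Returns null when the chain loops or exceeds maxHops.
function resolveRedirectChain(path, table, maxHops = 10) {
  const seen = new Set([path]);
  let current = path;
  for (let hop = 0; hop < maxHops && table.has(current); hop++) {
    current = table.get(current);
    if (seen.has(current)) return null; // loop detected
    seen.add(current);
  }
  // If the last path still redirects, the hop limit was reached.
  return table.has(current) ? null : current;
}
```

Here `resolveRedirectChain("/old/url.html", redirects)` yields `/new/url.html`, so both hops collapse into a single response.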

Looking critically, you could argue that the TTFB of the top one is 40ms faster than the bottom one. In this case, however, we should look at TT(Meaningful)FB: the first relevant byte arrives more than 300ms sooner, resulting in a better user experience (Core Web Vitals: Largest Contentful Paint).

Summary

Redirects are notoriously bad for performance! Redirect Liquidation is a simple technique to improve the user experience for a large chunk of your visitors.