This year I’ve helped people measure performance with the sitespeed.io dashboard, running synthetic tests with sitespeed.io and WebPageTest. I’ve learnt a few things along the way and would like to share them with you.

It doesn’t matter whether you use Open Source or proprietary software (well, actually it does, but that’s another story): my learnings apply to whatever tool you use.

Choosing URLs

You have the tool ready and want to get going. But where do you start? One of the most common questions I get is: which URLs should I test? You can choose either the most viewed ones (get them from Google Analytics, but make sure you look at real traffic) or the most important ones. They are not always the same.

It’s usually easiest to choose the most important ones, as long as you don’t involve more than one person. 🙂 In a large organization, a discussion about which URLs you should test can take hours, days or weeks. It’s better to just pick a few that you think are important for your users or for your business. You can always change them later.

How many URLs?

I cannot say this enough: start by testing just a few URLs. Start with five, analyze them, and make sure you get the metrics you want and that you understand them. Once your graphs are up and running you will get the urge to test EVERYTHING. I make this mistake every time 🙂 Instead, stick with five URLs for a while. See how they are doing, and make sure you measure the things that are important.
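If you want to combine the two ways of choosing URLs, one option is to put the URLs you consider important first and fill the remaining slots with the most viewed ones, capped at five. The following TypeScript is a minimal sketch under that assumption; `AnalyticsRow` and `pickUrlsToTest` are hypothetical names, and the row shape is a stand-in for whatever your analytics export actually looks like.

```typescript
// Hypothetical shape of one row in an analytics export (adapt to your tool).
interface AnalyticsRow {
  url: string;
  pageviews: number;
}

// Build a short test list: important URLs first, then the most viewed ones.
function pickUrlsToTest(
  important: string[], // URLs you consider critical for users or business
  analytics: AnalyticsRow[], // page view data, ideally filtered to real traffic
  limit = 5 // start small
): string[] {
  const picked: string[] = [];

  // Important URLs go first, in the order given, without duplicates.
  for (const url of important) {
    if (picked.length >= limit) break;
    if (!picked.includes(url)) picked.push(url);
  }

  // Fill any remaining slots with the most viewed URLs.
  const byViews = [...analytics].sort((a, b) => b.pageviews - a.pageviews);
  for (const row of byViews) {
    if (picked.length >= limit) break;
    if (!picked.includes(row.url)) picked.push(row.url);
  }
  return picked;
}
```

Keeping `limit` as a parameter makes it easy to grow the list later, once you trust the metrics for the first five.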

How many times should you test each URL?

The number of runs depends on your site and on what you want to collect. Pat told us that he does five runs when testing in Chrome. Hitting a URL 3-5 times is often OK for timing metrics, but increasing to 7-11 runs can give more reliable values. Start low, and if you see a lot of variation between runs, increase the number of runs until the values are stable.
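"A lot of variation" can be made concrete by looking at the spread of one timing metric across runs. This TypeScript sketch uses the coefficient of variation (standard deviation divided by the mean); the 10% threshold is an assumption for illustration, not a standard value, and the function names are hypothetical.

```typescript
// Median of a list of timings; less sensitive to one slow run than the mean.
function median(values: number[]): number {
  if (values.length === 0) throw new Error("no values");
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Coefficient of variation: population standard deviation relative to the mean.
function coefficientOfVariation(values: number[]): number {
  const mean = values.reduce((sum, v) => sum + v, 0) / values.length;
  const variance = values.reduce((sum, v) => sum + (v - mean) ** 2, 0) / values.length;
  return Math.sqrt(variance) / mean;
}

// Suggest more runs when the spread between runs is larger than maxCv.
// The default of 0.1 (10%) is an arbitrary example threshold.
function needsMoreRuns(timingsMs: number[], maxCv = 0.1): boolean {
  return coefficientOfVariation(timingsMs) > maxCv;
}
```

For example, three runs of 1000, 1010 and 990 ms are tight enough, while 1000, 2000 and 1500 ms suggest you should add runs before trusting the median.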

Getting timing metrics is one thing, but it’s also important to collect data on how your page is built. You need to keep track of page size, how many synchronously loaded JavaScript files you have, and so on. For that kind of information, one run per URL is enough.
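As an example of a structural metric that a single run is enough for, here is a crude TypeScript sketch that counts external scripts loaded synchronously, meaning `<script src>` tags without `async` or `defer`. Module scripts are deferred by default, so they are skipped. It uses regular expressions on raw HTML, which is fragile (comments, attribute values containing `>` and so on); a real tool would inspect the DOM or the browser's own data instead. The function name is hypothetical.

```typescript
// Count external <script> tags that block parsing (no async/defer, not a module).
function countSyncScripts(html: string): number {
  const scriptTag = /<script\b([^>]*)>/gi; // captures the attribute string
  let count = 0;
  let match: RegExpExecArray | null;
  while ((match = scriptTag.exec(html)) !== null) {
    const attrs = match[1];
    // Attribute names must be preceded by whitespace, so data-src or
    // src="async.js" do not produce false matches.
    const hasSrc = /(?:^|\s)src\s*=/i.test(attrs);
    const isAsyncOrDefer = /(?:^|\s)(?:async|defer)(?=\s|=|$)/i.test(attrs);
    const isModule = /(?:^|\s)type\s*=\s*["']?module\b/i.test(attrs);
    if (hasSrc && !isAsyncOrDefer && !isModule) count++;
  }
  return count;
}
```

Tracking a number like this over time, together with page size, tells you when someone adds a blocking script even if the timing metrics have not moved yet.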

How often to test?

How often do you release? Make sure to test at least as often as you release, so you can trace a degradation back to the release that caused it. Do you have a content team that can change everything in production? By everything I mean changing JavaScript and CSS and adding images. Then test as often as possible.

Crawling

You test your five pages, but your site is larger than that, right? And you want to find out if performance degrades on the rest of it. I love crawling a site to find problematic pages. If your site is content heavy and editors have full access to your CMS, anything can and will happen, and you want to know about it early. What I like about using synthetic testing to catch these things is that you can do it from the outside, without changing anything. You just add a job that crawls your site now and then.
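The core of such a crawl job is a breadth-first walk over same-origin links. This TypeScript sketch assumes a hypothetical `getLinks` function that you supply; in practice it would fetch a page and return the `href` values of its links, and dedicated testing tools come with their own crawlers. The `maxPages` cap keeps the crawl from running forever on a large site.

```typescript
// Breadth-first crawl of same-origin pages, starting from startUrl.
// getLinks is supplied by you (hypothetical): it returns the raw hrefs on a page.
function crawl(
  startUrl: string,
  getLinks: (url: string) => string[],
  maxPages = 100
): string[] {
  const start = new URL(startUrl);
  start.hash = ""; // normalize, so "https://example.com" becomes ".../"
  const origin = start.origin;
  const seen = new Set<string>([start.href]);
  const queue: string[] = [start.href];
  const visited: string[] = [];

  while (queue.length > 0 && visited.length < maxPages) {
    const url = queue.shift()!;
    visited.push(url);
    for (const href of getLinks(url)) {
      let next: URL;
      try {
        next = new URL(href, url); // resolve relative links against the page
      } catch {
        continue; // skip hrefs that are not valid URLs
      }
      next.hash = ""; // /page#a and /page#b are the same page
      if (next.origin === origin && !seen.has(next.href)) {
        seen.add(next.href);
        queue.push(next.href);
      }
    }
  }
  return visited; // hand these URLs to your synthetic testing tool
}
```

Breadth-first order means the pages closest to the start page, usually the most linked ones, get tested first when `maxPages` cuts the crawl short.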

Crawling a big international site a couple of years ago gave me this image:

Guess the size? 14.2 MB! If you don’t do it already, start crawling your site now.
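To catch pages like that automatically, you can check the weight of every crawled page against a budget. sitespeed.io, WebPageTest and browser devtools can all produce HAR files, so this TypeScript sketch reads page weight from a HAR file by summing `response.bodySize` (a HAR 1.2 field that is -1 when unknown and 0 for cached responses). It assumes one page per HAR file and types only the fields it uses; the 2 MB default budget is an arbitrary example.

```typescript
// Only the HAR 1.2 fields used here are typed; real HAR files contain far more.
interface HarEntry {
  response: { bodySize: number }; // -1 if unknown, 0 for cached (304) responses
}
interface Har {
  log: { entries: HarEntry[] };
}

// Sum of response body sizes in bytes (excludes headers).
function pageWeightBytes(har: Har): number {
  return har.log.entries
    .map((entry) => entry.response.bodySize)
    .filter((size) => size > 0) // skip unknown and cached responses
    .reduce((sum, size) => sum + size, 0);
}

// Flag a page when it exceeds the budget (2 MB here is just an example).
function isOverBudget(har: Har, budgetBytes = 2 * 1024 * 1024): boolean {
  return pageWeightBytes(har) > budgetBytes;
}
```

Run against every URL from a crawl, a check like this would have flagged a 14.2 MB page immediately.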

Verify your metrics

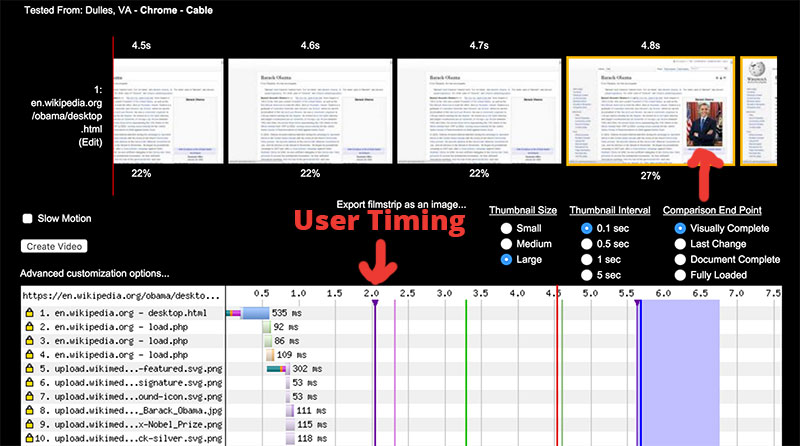

One thing that is easy to forget: always verify your metrics. The old classic is first paint: the background color changes early, so first paint looks fast, and then it takes three seconds until anything else happens on screen. WebPageTest filmstrips are perfect for catching this.

At the Wikimedia Foundation we tried out User Timing marks to know when the first main image (Obama, in this case) appears, using the latest and greatest technique: setting a mark in the image’s onload handler (clearing the mark first), plus a script tag right after the image doing the exact same thing. This pattern makes sure we keep whichever value comes last, which should be when the image is displayed.
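The pattern can be sketched with the standard User Timing calls `performance.clearMarks`, `performance.mark` and `performance.getEntriesByName`. In this TypeScript sketch the mark name `main-image`, the image file name and the helper functions are hypothetical; the post does not say what names Wikimedia used. Because every call clears the previous mark before setting a new one, only the last call's timestamp survives.

```typescript
// Hypothetical mark name; use whatever your RUM or test tool collects.
const MAIN_IMAGE_MARK = "main-image";

// Called twice in the page, assuming the function is defined earlier
// (for example in an inline script in <head>):
//
//   <img src="obama.jpg" onload="markMainImage()">
//   <script>markMainImage()</script>
//
function markMainImage(): void {
  performance.clearMarks(MAIN_IMAGE_MARK); // drop any earlier mark with this name
  performance.mark(MAIN_IMAGE_MARK); // the latest call always wins
}

// Read the surviving mark back, or undefined if it was never set.
function mainImageTime(): number | undefined {
  const marks = performance.getEntriesByName(MAIN_IMAGE_MARK, "mark");
  return marks.length > 0 ? marks[marks.length - 1].startTime : undefined;
}
```

Whether "the last of onload and the inline script" really equals "the image is on screen" depends on the browser, which is exactly what the next paragraph is about.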

At first glance, testing in Chrome, the metrics looked OK, but testing in Firefox and IE11 showed us that they were somewhat off. To be fair, it didn’t work in Chrome either once we went through all the runs we did. It sometimes looked like this:

A metric may work for other sites but not for yours, so always verify your metrics.
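Since the problem only showed up when going through all the runs, one way to verify a metric is to write down, for each run, when the image visibly appears in the filmstrip and compare it with what the metric reported. This TypeScript sketch assumes you have collected those pairs yourself; `RunCheck`, `suspiciousRuns` and the 100 ms tolerance are hypothetical, and a sensible tolerance depends on your filmstrip's frame interval.

```typescript
// One run: what the tool reported versus what you saw in the filmstrip.
interface RunCheck {
  run: number;
  metricMs: number; // e.g. the User Timing mark reported for this run
  observedMs: number; // when the image visibly appeared in the filmstrip
}

// Return the run numbers where the metric disagrees with the filmstrip
// by more than toleranceMs (100 ms is an arbitrary example).
function suspiciousRuns(runs: RunCheck[], toleranceMs = 100): number[] {
  return runs
    .filter((r) => Math.abs(r.metricMs - r.observedMs) > toleranceMs)
    .map((r) => r.run);
}
```

If many runs come back as suspicious in one browser, the metric probably does not measure what you think it does there.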

Summary

- Start out by testing at most five URLs. When you know your site you can start testing more.

- First test each URL three times, and check if the metrics are consistent. If not, increase the number of runs.

- How often you should run your tests depends on how often you deploy and change content in production. Make sure to test as often as you need to catch changes and pinpoint them.

- Crawl the site to find problems on other URLs.